The White House AI Framework Has 3 Things You Should Actually Care About

by Deon Metelski, CPO

On March 20, the White House released its National Policy Framework for Artificial Intelligence — four pages of legislative recommendations to Congress covering everything from child safety to energy policy to workforce training. Most of the coverage has focused on the preemption fight with states. That matters, but it's not the whole story. You should also consider the Holland & Knight analysis, the WilmerHale breakdown, and the GUARDRAILS Act to get the big picture.

Three things in the Framework should change how you think about your AI strategy right now, even though none of it is law yet.

1. There will be no federal AI regulator.

Buried in the "Innovation and American AI Dominance" section is a sentence that got almost no attention: Congress should not create any new federal rulemaking body to regulate AI.

Read that again. The Administration's position is that AI oversight belongs with the agencies that already regulate your industry. FDA for clinical AI. SEC for financial AI. CMS for reimbursement-related AI. DOT for autonomous vehicles. And so on.

This position is a bigger deal than the preemption headline. If you've been waiting for a single federal AI authority to tell you what the rules are — stop waiting. It's not coming. The regulatory model for AI in the United States will be distributed, fragmented, and sector-specific for the foreseeable future. That's not a prediction; the White House just said it out loud.

What that means practically: every organization deploying AI needs a compliance posture that satisfies multiple regulators simultaneously, because there won't be a single rulebook. You'll have your industry regulator, whatever federal standard Congress eventually passes, whichever state laws survive preemption (more on that in a second), and your contractual obligations to customers and partners.

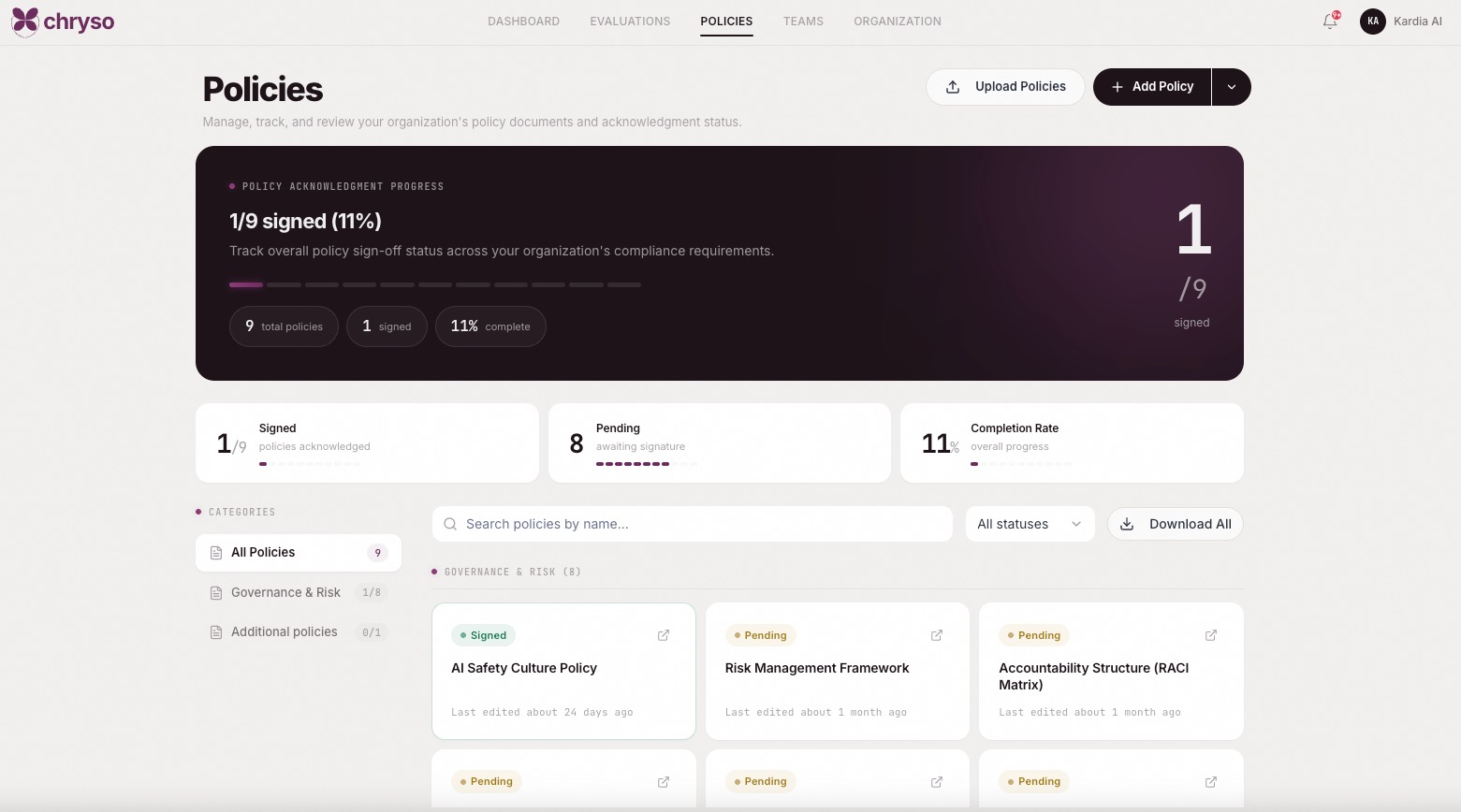

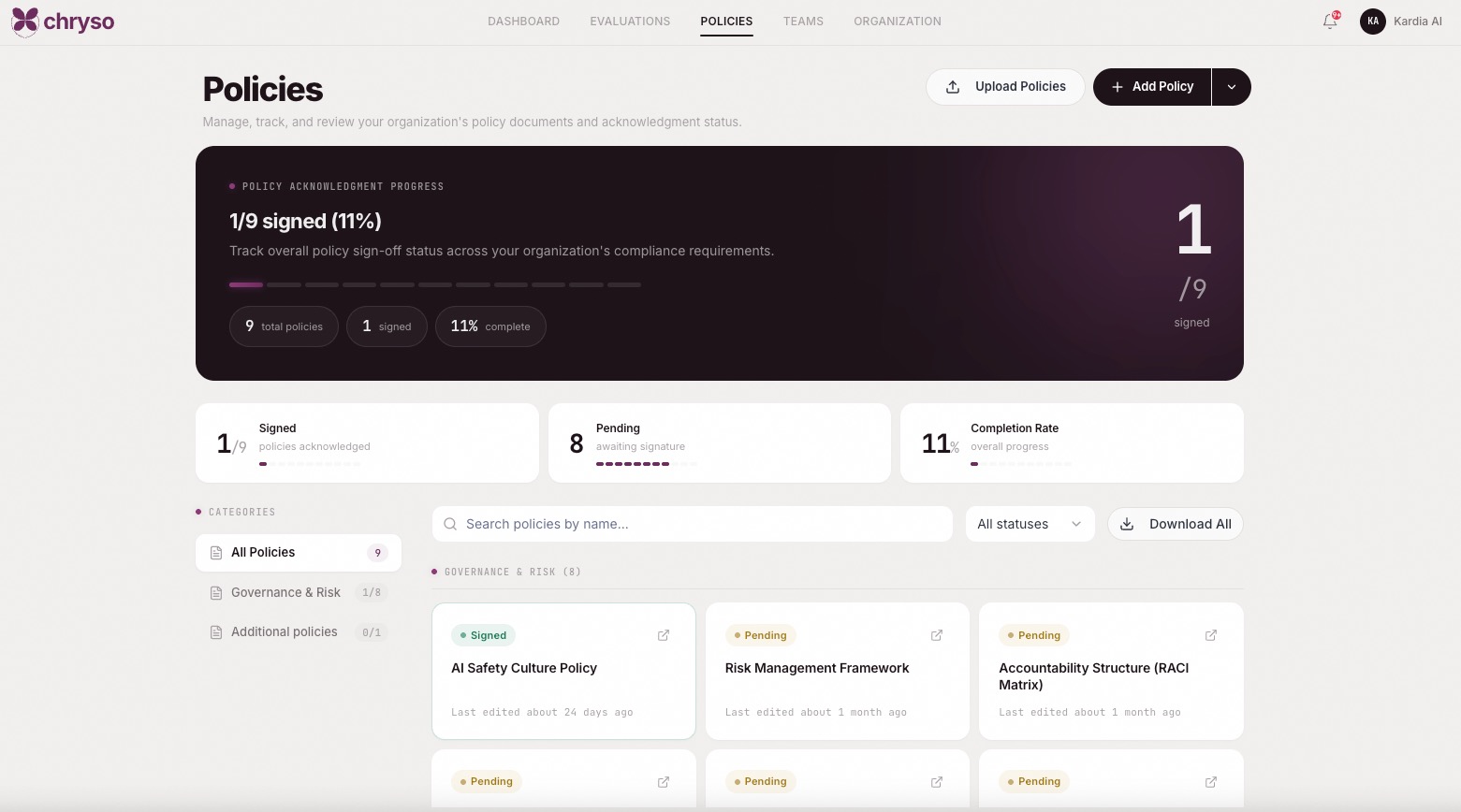

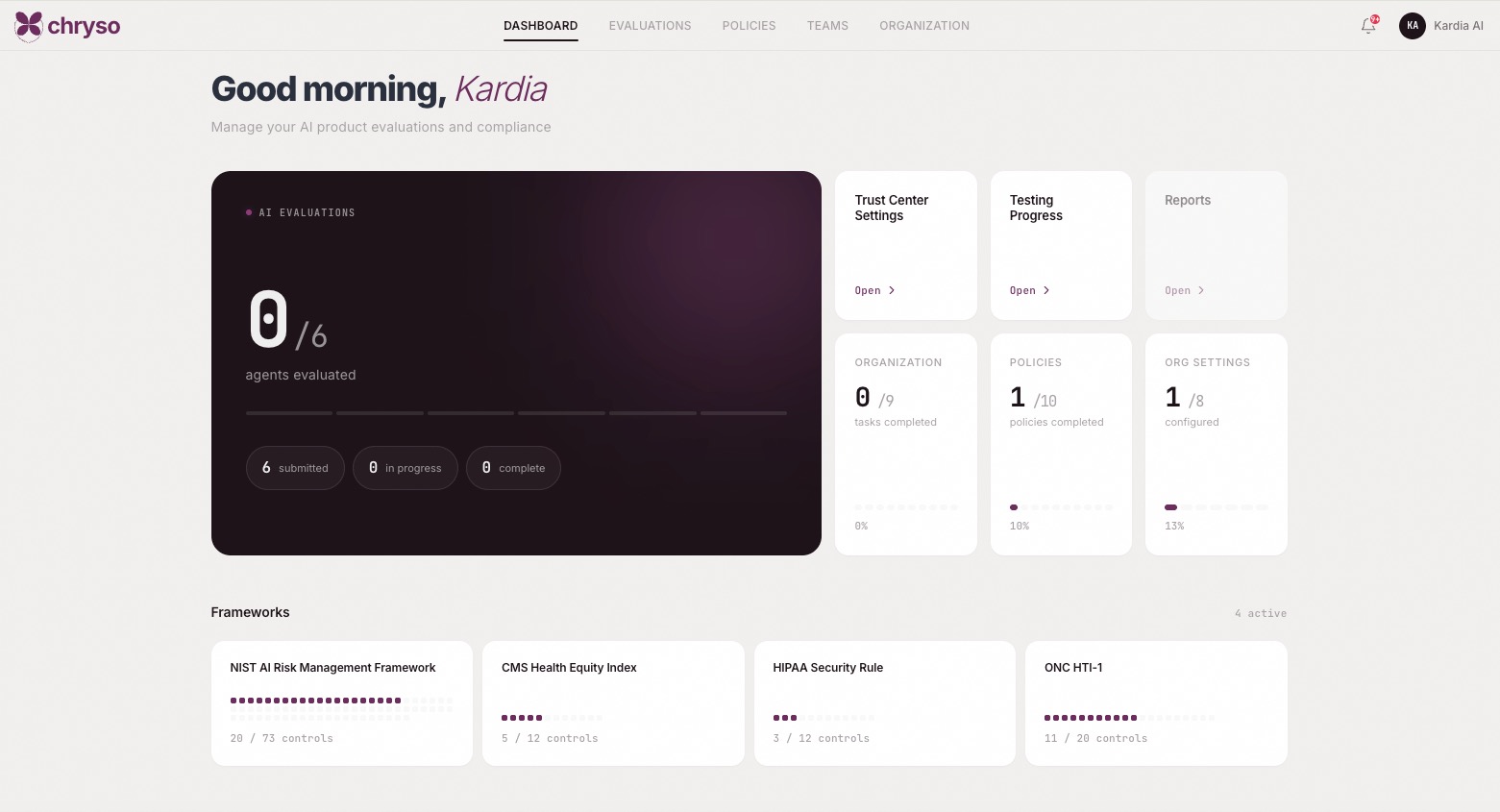

This need is why we built Chryso: one compliance platform that maps controls across NIST AI RMF, HIPAA, CMS, ONC, and applicable state AI legislation. When the Framework's recommendations become law, the mappings are updated; when a new state law takes effect, it is added. The alternative, manually tracking every framework in spreadsheets and hoping your legal team catches the changes, doesn't scale, and the White House just confirmed you'll be doing it across multiple agencies indefinitely.

2. The preemption fight is real, but plan for the hybrid.

The Framework's preemption section says what the Administration has been saying since EO 14365 in December: states should not be permitted to regulate AI development, because it's an inherently interstate phenomenon with national security implications. One federal standard, not fifty state ones.

That's the aspiration. Here's the reality: Congress hasn't acted. The GUARDRAILS Act, introduced the same day by a bipartisan group including Reps. Beyer, Matsui, and Lieu, would repeal the underlying executive order in its entirety. The Colorado AI Act is still set to take effect this year. California's automated decision-making amendments under the CCPA are already in effect. And the Framework itself carves out significant state authority: police powers, zoning, procurement, fraud prevention, child protection, and government use of AI all stay with the states.

So even in the Administration's own vision, state law doesn't disappear. It narrows. And in the more likely near-term scenario where comprehensive federal legislation stalls, we stay in the patchwork.

The organizations that do well in this environment aren't the ones betting on a single outcome. They're the ones that build governance infrastructure capable of absorbing either result. If federal preemption passes, you simplify. If it doesn't, you're already covered. If it passes with carve-outs (which is what the Framework actually recommends), you adjust the mappings and keep moving.
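To make the three outcomes concrete, here is a minimal sketch of how a requirements inventory could be filtered by scenario. Everything in it is an assumption for illustration: the `Scenario` and `Requirement` names, the `carved_out` flag, and the sample entries are hypothetical, and it does not describe how Chryso or any real platform is implemented.

```python
from dataclasses import dataclass
from enum import Enum


class Scenario(Enum):
    NO_PREEMPTION = "no_preemption"    # the patchwork continues
    FULL_PREEMPTION = "full"           # one federal standard, no state rules
    CARVE_OUTS = "carve_outs"          # preemption with preserved state authority


@dataclass(frozen=True)
class Requirement:
    source: str               # hypothetical label for the rule's origin
    level: str                # "federal" or "state"
    carved_out: bool = False  # state authority that would survive preemption


def active_requirements(reqs, scenario):
    """Return the requirements that still apply under the given scenario."""
    active = []
    for r in reqs:
        if r.level == "federal":
            active.append(r)  # federal and sector rules apply in every scenario
        elif scenario is Scenario.NO_PREEMPTION:
            active.append(r)  # every state rule stays in force
        elif scenario is Scenario.CARVE_OUTS and r.carved_out:
            active.append(r)  # only carved-out state rules survive
    return active


# Illustrative inventory; the entries are placeholders, not real statutes.
REQS = [
    Requirement("sector_regulator", "federal"),
    Requirement("state_ai_development_rule", "state"),
    Requirement("state_child_protection_rule", "state", carved_out=True),
]
```

The point of the design is that the inventory never changes when the politics do. Only the scenario passed to `active_requirements` changes, which is the "adjust the mappings and keep moving" posture in miniature.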

That's the posture Chryso is designed for. We track AI laws and standards. Whether the U.S. consolidates to a single standard or stays fragmented across 50 states, your evidence base stays current. You don't rebuild your compliance program every time the political winds shift.

3. Child privacy rules are coming for your training data.

The child safety section is the most detailed in the entire Framework and includes a provision with implications far beyond companies that serve kids.

Congress is being asked to affirm that existing child privacy protections — COPPA and its state equivalents — apply to AI training data. Not just to the collection of children's data, but to its downstream use in model training and targeted advertising. If data was collected from a minor, the consent and minimization requirements follow that data into the training pipeline.

This sounds like a children's issue. It's actually an enterprise data governance issue.

Think about it: most large-scale AI training datasets are assembled from broad data sources — web scrapes, transactional records, interaction logs, behavioral data. Unless you've specifically filtered for age, some percentage of that data comes from minors. If COPPA obligations attach to the use of training data, every organization that uses AI trained on those datasets has a provenance problem. Where did the training data come from? Did it include minors' data? Can you prove consent? Can your vendor?

Most organizations can't answer those questions today. The Framework says they'll need to.
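Those four questions translate naturally into a provenance record per dataset. The sketch below is one hypothetical shape for such a record, with a helper that lists the questions still unanswered. The field names and the rule that vendor documentation is always expected are assumptions made for illustration, not requirements stated in the Framework or in COPPA.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DatasetProvenance:
    name: str
    source: Optional[str] = None              # where the training data came from
    includes_minors: Optional[bool] = None    # None means nobody has assessed it
    consent_evidence: Optional[str] = None    # pointer to consent records, if any
    vendor_attestation: Optional[str] = None  # pointer to the vendor's documentation


def open_questions(p):
    """List the provenance questions this record cannot yet answer."""
    gaps = []
    if p.source is None:
        gaps.append("source unknown")
    if p.includes_minors is None:
        gaps.append("minors' data not assessed")
    elif p.includes_minors and p.consent_evidence is None:
        gaps.append("no consent evidence for minors' data")
    if p.vendor_attestation is None:
        gaps.append("no vendor attestation")
    return gaps
```

An empty record returns three gaps, which is roughly where most organizations start. A record returns an empty list only once the source is documented, the presence of minors' data has been assessed (with consent evidence if it is present), and the vendor has supplied its own documentation.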

This is the kind of problem that looks small until it becomes a regulatory enforcement action. And it ties directly to the IP questions we wrote about recently — the principle is the same. You need to know what went into your AI system, who it came from, and what rights govern its use. Not just for copyright reasons, but for privacy reasons that are about to get a lot more specific.

On the KORA platform, agents access knowledge bases that customers populate and control, with clear provenance on data sources. Chryso's policy templates include data governance and provenance tracking frameworks that document what feeds into an AI system's pipeline — the kind of evidence you'll need when these training-data provisions become enforceable.

Everything else in the Framework

Four pillars remain: IP and creators, free speech, workforce development, and community infrastructure. They matter, but each is either being left to the courts (IP fair use), already moving with bipartisan momentum (fraud prevention), or a longer-term investment (land-grant AI programs). Read them. Track them. But don't reorganize your AI strategy around them today.

The three that should change your behavior now: no central regulator means distributed compliance is permanent. Preemption is aspirational, but the patchwork is real. And training-data privacy obligations are expanding, whether your vendor is ready or not.

The organizations that navigate this well aren't the ones with the best lobbyists. They're the ones with governance infrastructure that treats regulatory change as a normal operating condition, not a crisis. That's the stance we've taken at actAVA with KORA and Chryso, and the Framework just validated the thesis.